The Massachusetts Institute of Technology (MIT) has unveiled a set of algorithms designed to improve coordination and perception for underwater human-machine teams operating in challenging environments.

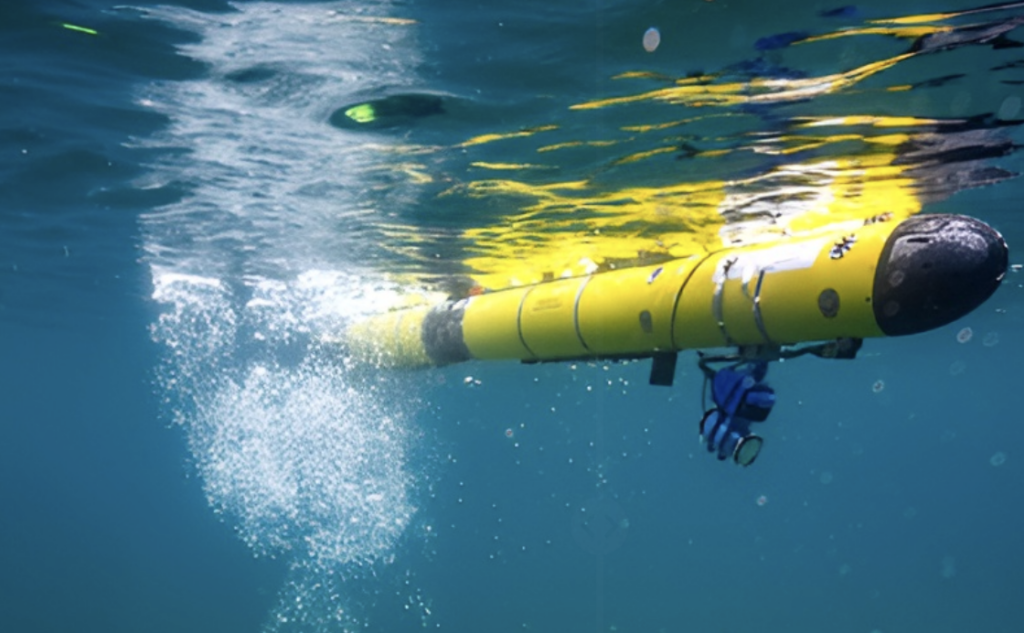

Building on foundational work from the MIT Marine Robotics Group, a team led by principal investigator Madeline Miller developed a navigation algorithm that tracks a diver’s position relative to an autonomous underwater vehicle (AUV).

“With the algorithm demonstrated by MIT, the vehicle only needed to calculate the distance, or range, to the diver at regular intervals to solve the optimization problem of estimating the positions of both the vehicle and diver over time,” Miller said.

By maintaining accurate position tracking between the diver and AUV, the algorithm helps enable coordinated movement and real-time adjustments in low-visibility environments.
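The article does not publish the algorithm itself, so the following is only a minimal sketch of the general idea of range-only estimation. It makes a strong simplifying assumption that the MIT work does not: the vehicle's own positions are treated as known and the diver as stationary, so only the diver's 2D position is estimated. The function name `estimate_diver_position`, the Gauss-Newton solver and all numbers are illustrative choices, not the team's method.

```python
import math

def estimate_diver_position(vehicle_positions, ranges, initial_guess, iterations=20):
    """Fit a 2D diver position to range measurements with Gauss-Newton.

    Assumption for illustration: vehicle positions are known exactly and the
    diver does not move. The real problem estimates both trajectories.
    """
    x, y = initial_guess
    for _ in range(iterations):
        # Accumulate the 2x2 normal equations (J^T J) step = J^T residual.
        a11 = a12 = a22 = b1 = b2 = 0.0
        for (vx, vy), measured in zip(vehicle_positions, ranges):
            dx, dy = x - vx, y - vy
            predicted = math.hypot(dx, dy)
            if predicted < 1e-9:
                continue  # direction is undefined if the guess sits on the vehicle
            jx, jy = dx / predicted, dy / predicted  # gradient of the predicted range
            residual = measured - predicted
            a11 += jx * jx
            a12 += jx * jy
            a22 += jy * jy
            b1 += jx * residual
            b2 += jy * residual
        det = a11 * a22 - a12 * a12
        if abs(det) < 1e-12:
            break  # degenerate geometry: all directions to the vehicle are parallel
        step_x = (a22 * b1 - a12 * b2) / det
        step_y = (a11 * b2 - a12 * b1) / det
        x, y = x + step_x, y + step_y
        if math.hypot(step_x, step_y) < 1e-9:
            break  # converged
    return x, y

if __name__ == "__main__":
    # Hypothetical data: ranges taken from four vehicle positions to a diver at (3, 4).
    vehicle_positions = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
    ranges = [math.hypot(3.0 - vx, 4.0 - vy) for vx, vy in vehicle_positions]
    print(estimate_diver_position(vehicle_positions, ranges, initial_guess=(5.0, 5.0)))
```

A single range only places the diver somewhere on a circle around the vehicle. Ranges taken at regular intervals from different vehicle positions are what narrow that down to a point, which is why the vehicle's motion matters as much as the measurements.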

AI Classifier

For underwater perception, the team also developed an algorithm that processes optical and sonar data collected during missions and classifies what it sees.

It is also designed to seek human input for objects that cannot be confidently classified.

“The idea is for the classifier to pass along some information (say, a bounding box around an image) to the diver and indicate, ‘I think this is a tire, but I’m not sure. What do you think?’ Then, the diver can respond, ‘Yes, you’ve got it right,’ or ‘No, look over here in the image to improve your classification,’” Miller explained.

This feedback loop combines the AUV’s real-time sensing with diver judgment, with the aim of improving classification accuracy in uncertain cases during missions.
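The exchange Miller describes can be pictured as a confidence threshold with a fallback to the diver. The sketch below is hypothetical: the `Detection` fields, the `resolve_label` function, the `ask_diver` callback and the 0.8 threshold are assumptions made for illustration, and the article does not say how the team's classifier decides when to ask.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    label: str            # the classifier's best guess, e.g. "tire"
    confidence: float     # score between 0.0 and 1.0
    bounding_box: tuple   # (x, y, width, height) in image pixels

def resolve_label(detection, ask_diver, threshold=0.8):
    """Return (label, source), asking the diver only when confidence is low.

    ask_diver is a hypothetical callback that shows the detection to the diver
    and returns the label the diver chooses, or None if there is no reply.
    """
    if detection.confidence >= threshold:
        return detection.label, "classifier"
    answer = ask_diver(detection)
    if answer is None:
        # No reply: keep the guess but flag it as unconfirmed.
        return detection.label, "unconfirmed"
    return answer, "diver"

if __name__ == "__main__":
    unsure = Detection(label="tire", confidence=0.55, bounding_box=(120, 80, 64, 64))
    # Stand-in for the diver confirming the classifier's guess.
    print(resolve_label(unsure, ask_diver=lambda d: d.label))
```

Keeping the diver out of the loop for confident detections matters in this setting, since the article notes that underwater communication is constrained.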

The Problem It Solves

Underwater human-robot teams are currently limited by communication constraints and the difficulty of processing information in real time below the surface.

While AUVs can process data quickly, they lack the dexterity required for complex physical tasks such as infrastructure repair.

In low-light deep-sea environments, conventional sensors also tend to produce limited imagery, often restricting data quality and slowing the development of training datasets for underwater AI systems.

Miller’s team aims to bridge these gaps by combining human judgment with robotic perception and autonomy in a coordinated system.

Early testing at the Great Lakes Research Center included a tube-shaped prototype designed to facilitate direct communication between divers and AUVs.

Miller is now seeking industry partnerships to further refine the technologies, with interest from both military and commercial sectors.

“Ultimately, we want to devise solutions for navigation and perception in expeditionary environments,” she said.